First Production Block Wrapped Up!

Hey guys! Product owner & team lead Riko here. I’m here to let all of you know that we have now wrapped up our first block of production! Whoohoo!

Watch out, Unity! Project Sulphur is coming to get ya!

Our first block of production has just been wrapped up. That is 8 weeks of production, 8 weeks of blood, sweat and tears - but it has been very much worth it. We have achieved a lot over the course of the first production block, and I’d like to walk you through a few of the highlights. So get ready, sit tight and grab a coffee, because we’ve got a lot of ground to cover.

First demo game build with Project Sulphur!

I want to kick things off with the fact that we have built our first little “game” in Project Sulphur. You can download it from here! The game showcases all the basics of the engine coming together: physics, scripting, rendering, input, core engine, etc!

Woo, such demo game!

Open sourcing & Online documentation

Project Sulphur is open source now - with appropriate documentation available right here on this website! The open source version of the engine isn’t quite the same version that we use internally. That all has to do with the fact that we support not only PC, but also PS4. We built an in-house tool called inp4git that helps us create two separate versions of the code base: one that is open-sourceable, and one that is not.

Either way, you can check out the source code for Project Sulphur on our GitHub and the accompanying documentation right here on this very website.

Sulphur Editor

As you may remember from last week’s blog post, the first semblance of an editor is now in place! Our tools programmers have done a phenomenal job over the last 8 weeks, resulting in an actual editor. While it is still very barebones, it does have a lot of the core functionality inside of it. We’ve got a game view inside the editor, an asset browser to easily import your assets, and you can even spawn your models in the world already.

Sulphur Editor in Action!

Check out this blog post for a more detailed summary of the Sulphur Editor & supporting tools!

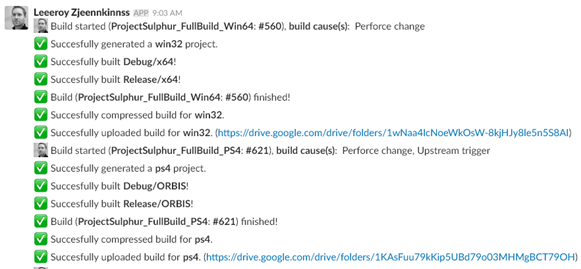

Build Pipelines

Internally we have set up an automated build pipeline that lets us know at all times whether we have a working build for Project Sulphur or not. It’s set up using a build server that is provided by our university (Thanks NHTV!) which runs Jenkins. Whenever we make a change to our code base, the project gets compiled for all our build targets. All the builds that get created are put on our Google Drive, and the pipeline automatically notifies our team on Slack whether the builds succeeded or failed.

Build reporting on Slack!

You can check out this blog post for more info on our build pipelines and tooling!

Identical Rendering on PC and PS4

We now have all the base functionality for rendering in the project, allowing us to achieve identical rendering on both PC and PS4. On PC, we’ve implemented DirectX 12 and on PS4 we have implemented GNM. Both of these are hidden behind an abstraction layer, allowing us to simply send instructions to the abstraction layer while the implementation underneath does all the difficult things like interacting with the APIs. Basically, this means we have most of the core functionality we need for implementing all the cool rendering stuff we have coming up throughout the rest of the year.

Identical rendering on PC and PS4!
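To give a rough idea of what "hidden behind an abstraction layer" means, here is a minimal sketch in C++. It is not the actual Sulphur code: `IRenderBackend`, `D3D12Backend`, `GnmBackend` and `Renderer` are names made up for this example, and the two backends only record strings instead of talking to DirectX 12 or GNM.

```cpp
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical platform-agnostic interface; the real Sulphur API may differ.
class IRenderBackend {
public:
    virtual ~IRenderBackend() = default;
    virtual void DrawMesh(const std::string& mesh_name) = 0;
    virtual void Present() = 0;
    // Commands recorded so far, so the sketch can be inspected without a GPU.
    virtual const std::vector<std::string>& Commands() const = 0;
};

// Stand-in for a DirectX 12 backend: records strings, makes no D3D12 calls.
class D3D12Backend final : public IRenderBackend {
public:
    void DrawMesh(const std::string& mesh_name) override {
        commands_.push_back("d3d12: draw " + mesh_name);
    }
    void Present() override { commands_.push_back("d3d12: present"); }
    const std::vector<std::string>& Commands() const override { return commands_; }
private:
    std::vector<std::string> commands_;
};

// Stand-in for a GNM backend: records strings, makes no GNM calls.
class GnmBackend final : public IRenderBackend {
public:
    void DrawMesh(const std::string& mesh_name) override {
        commands_.push_back("gnm: draw " + mesh_name);
    }
    void Present() override { commands_.push_back("gnm: present"); }
    const std::vector<std::string>& Commands() const override { return commands_; }
private:
    std::vector<std::string> commands_;
};

// Engine-facing renderer: it only ever talks to the interface.
class Renderer {
public:
    explicit Renderer(std::unique_ptr<IRenderBackend> backend)
        : backend_(std::move(backend)) {}

    // Issues one draw per mesh, then presents the frame.
    void RenderFrame(const std::vector<std::string>& mesh_names) {
        for (const std::string& name : mesh_names) {
            backend_->DrawMesh(name);
        }
        backend_->Present();
    }

    const IRenderBackend& Backend() const { return *backend_; }

private:
    std::unique_ptr<IRenderBackend> backend_;
};
```

The point of the design is that `Renderer::RenderFrame` is identical on both platforms; only the backend object passed to the constructor changes.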

Shader Based Materials

This is a pretty major thing in terms of rendering. We have implemented a system that allows us to create shader based materials. This is pretty much industry-standard and very much a “core” part of the rendering pipeline, so we’ve spent time creating the first iteration of this system in our engine. Don’t know what shader based materials are? Materials by themselves allow you to define the look of a mesh when it gets rendered. The “Shader Based” part means that the input parameters of the material (such as albedo maps, roughness maps, etc.) are defined by a shader that is attached to the material. It allows you to create custom shaders and use those as materials for your artists to play around with. So: endless possibilities with materials!

Two of the same spheres, with two different (shader based) materials!
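If it helps to see the idea in code, here is a small C++ sketch of a material whose inputs are defined by its shader. The `Shader` and `Material` types and the input names are assumptions made for this example, not our engine's real classes.

```cpp
#include <map>
#include <set>
#include <string>
#include <utility>

// Hypothetical shader description: just a name and the texture inputs it declares.
struct Shader {
    std::string name;
    std::set<std::string> texture_inputs;  // e.g. "albedo", "roughness"
};

// Hypothetical material: it can only bind textures to inputs its shader declares.
class Material {
public:
    explicit Material(Shader shader) : shader_(std::move(shader)) {}

    // Binds a texture to an input. Returns false (and binds nothing)
    // when the attached shader does not declare that input.
    bool SetTexture(const std::string& input, const std::string& texture_path) {
        if (shader_.texture_inputs.count(input) == 0) {
            return false;
        }
        textures_[input] = texture_path;
        return true;
    }

    // True once every input declared by the shader has a texture bound.
    // Only declared inputs can be stored, so comparing sizes is enough.
    bool IsComplete() const {
        return textures_.size() == shader_.texture_inputs.size();
    }

private:
    Shader shader_;
    std::map<std::string, std::string> textures_;
};
```

Swapping the shader changes which inputs the material accepts, which is how two identical spheres can end up looking completely different.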

Cameras & Multiple Render Targets

Of course, a game engine can’t go without supporting cameras. You can now attach a CameraComponent to any of your entities - whoohoo, more essential base functionality! One more important thing to mention is that the cameras now also support multiple render targets. Basically, you can make the camera render into more than just a single texture (which is the default behaviour). This is useful for many different rendering algorithms, such as deferred lighting or any screen space post processing effect (SSAO, SSR, FXAA, etc.).

Cup rendered by two cameras inside of debug spheres. One static camera, one dynamic camera. In the bottom right, you see the rendering output from the dynamic camera.
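Here is a rough C++ sketch of what a camera with multiple render targets could look like. `CameraComponent` is the name we use in the engine, but everything else here (the `RenderTarget` struct, the method names, the target names) is made up for illustration and is not the real interface.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical render target: a named texture with a size.
struct RenderTarget {
    std::string name;
    int width;
    int height;
};

// Illustrative camera component, not the engine's actual class.
class CameraComponent {
public:
    // By default the camera renders into a single colour target.
    CameraComponent(int width, int height) {
        targets_.push_back(RenderTarget{"color", width, height});
    }

    // Adds an extra target with the same size as the default one,
    // for example a normal or depth buffer for deferred lighting.
    void AddRenderTarget(const std::string& name) {
        const int width = targets_.front().width;
        const int height = targets_.front().height;
        targets_.push_back(RenderTarget{name, width, height});
    }

    std::size_t TargetCount() const { return targets_.size(); }

    // Bounds-checked access; throws std::out_of_range for a bad index.
    const RenderTarget& Target(std::size_t index) const { return targets_.at(index); }

private:
    std::vector<RenderTarget> targets_;
};
```

A deferred lighting pass would then read the extra targets back as textures, while a camera that never calls `AddRenderTarget` behaves like a plain single-texture camera.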

Debug Rendering & ImGui

We also now have support for rendering debug primitives, which is useful for, well... Debugging. The debug rendering system allows you to render lines, spheres, cubes, cones, etc. Next to that, we have also created our own implementation layers for ImGui. ImGui is a very powerful, lightweight immediate-mode GUI library that is well suited to debug tooling. At very little development cost, we’re able to render all sorts of debug windows, menus and visuals.

ImGui!

Rigidbodies, Colliders & Raycasting with Bullet Physics

Throughout the block there have been some struggles on the physics team, but we pulled through in the end. We have integrated Bullet physics into the engine and placed it behind an abstraction layer, just like we did for the rendering system. We now have rigidbodies, basic primitive colliders and simple raycasting systems exposed through this abstraction layer.

Sulphur sulphur sulphur sulphur sulphur...
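For the curious, here is a tiny C++ sketch of colliders and raycasting exposed through an abstraction layer. To keep it self-contained it does not use Bullet at all: `IPhysicsWorld` and `SimplePhysicsWorld` are hypothetical names, and the implementation is a hand-written ray-sphere test standing in for a real physics backend.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

inline Vec3 Sub(const Vec3& a, const Vec3& b) { return Vec3{a.x - b.x, a.y - b.y, a.z - b.z}; }
inline float Dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

struct RaycastHit {
    int collider_id;
    float distance;  // distance along the ray to the hit point
};

// Hypothetical engine-facing physics interface.
class IPhysicsWorld {
public:
    virtual ~IPhysicsWorld() = default;
    // Returns an id for the new collider.
    virtual int AddSphereCollider(const Vec3& center, float radius) = 0;
    // `direction` must be normalized. Returns true and fills `out_hit`
    // with the closest hit, or returns false when nothing is hit.
    virtual bool Raycast(const Vec3& origin, const Vec3& direction,
                         RaycastHit& out_hit) const = 0;
};

// Stand-in backend: plain ray-sphere math instead of a physics library.
class SimplePhysicsWorld final : public IPhysicsWorld {
public:
    int AddSphereCollider(const Vec3& center, float radius) override {
        spheres_.push_back(Sphere{center, radius});
        return static_cast<int>(spheres_.size()) - 1;
    }

    bool Raycast(const Vec3& origin, const Vec3& direction,
                 RaycastHit& out_hit) const override {
        bool found = false;
        for (std::size_t i = 0; i < spheres_.size(); ++i) {
            const Vec3 to_center = Sub(spheres_[i].center, origin);
            const float along = Dot(to_center, direction);  // centre projected onto the ray
            const float dist_sq = Dot(to_center, to_center) - along * along;
            const float radius_sq = spheres_[i].radius * spheres_[i].radius;
            if (dist_sq > radius_sq) continue;              // ray passes beside the sphere
            const float t = along - std::sqrt(radius_sq - dist_sq);
            if (t < 0.0f) continue;                         // entry point is behind the origin
            if (!found || t < out_hit.distance) {
                out_hit = RaycastHit{static_cast<int>(i), t};
                found = true;
            }
        }
        return found;
    }

private:
    struct Sphere { Vec3 center; float radius; };
    std::vector<Sphere> spheres_;
};
```

Gameplay code written against `IPhysicsWorld` would not need to change if the backend behind it were swapped, which is the whole reason for putting the physics library behind a layer like this.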

Networking ft. Enet

Another thing that is very important for the engine: networking capabilities! Internally we are using Enet as our networking library. Enet, however, doesn’t have support for PS4, so we modified Enet’s source code so that it compiles & works for PS4. Our networking capabilities are mostly geared towards making it possible for the engine to connect to the editor. We’re also planning on exposing the networking systems to the end-developer, although the exact details for this still have to be thought through.

Three applications, one host, two clients. Boom.

Lua Scripting

As you should know from this blog post back in October 2017 by Daniël and Rodi, we have picked Lua as our weapon of choice for all scripting purposes in our engine. Now that we have finished up our first 8 weeks of production, I’m happy to say that we have a lot of the functionality for scripting in-engine now. We’ve set up the first iteration of the pipeline for exposing our C++-sided systems to Lua. We’ve exposed a few things already to the Lua scripting environment, such as the asset pipeline, ECS, transforms, input, rigidbodies, etc. We have big plans coming up for scripting, so stay tuned for that!

Other things we did

- Entity Component System

- Asset Management in Engine

- Job System (single threaded only so far)

- Custom cross platform memory allocators

- Coding structure guides & code style guides

- Doxygen for documentation

- Code review processes

- Switched from Pivotal to JIRA

- Many, many more things...

Wrapping up this blog post

It’s time to wrap up this blog post, as it’s getting quite lengthy already. Overall, we have made a ton of progress this block. We’ve gotten massive amounts of positive feedback, our supervisors are very happy with our project and we’ve even pitched the project to Ubisoft.

I think you could say I’m very happy with the way things are going.

You can expect another blog post somewhere between now and February 23rd about what the future for Project Sulphur looks like.

Stay tuned!

Riko out.